Seeing conspiracy theorists everywhere as a conspiracy paradox

Concerns about the consequences of conspiracy theories have grown. Widespread belief in unfounded conspiracy theories is only one of the associated risks. Conversely, academics must avoid the reverse error: adopting a Protective Conspiracy Framing that labels credible theories and proposals as conspiracy theories when they deserve scientific scrutiny.

Introduction

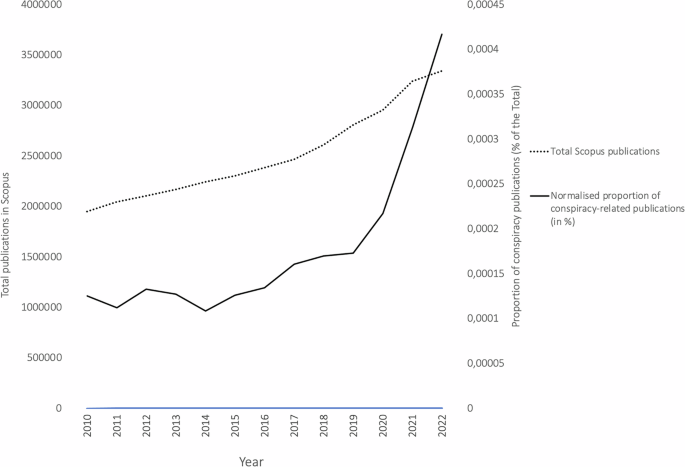

The terms ‘conspiracy’ and ‘conspiracy theory’ refer to secret plots by powerful and malevolent groups to manipulate important events. In recent years, conspiracy theories have received increasing media and scientific attention, particularly since the election of President Trump in 2016 and the onset of the COVID-19 pandemic. Searching Scopus for the term “conspiracy” in article titles, abstracts and keywords reveals a steady and substantial increase in scholarly attention over the past decade. As Fig. 1 shows, the number of publications referencing conspiracy-related terms has grown consistently since 2010, accelerating markedly from 2019 onwards. While the overall volume of scientific publications1 has also increased, field-normalised data show that conspiracy-related research has grown at a disproportionately higher rate. Specifically, such research accounted for 0.0173% of all indexed Scopus publications in 2019 compared with 0.0417% in 2022, a relative increase of around 141%. This suggests that conspiracy theories are both a growing concern in public discourse and a rapidly expanding area of empirical research and theoretical reflection across disciplines.
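The field-normalised comparison above reduces to a simple relative-change calculation on the two Scopus shares. A minimal sketch, using only the two percentages quoted in the text; the helper name `relative_increase` is hypothetical and introduced here purely for illustration:

```python
def relative_increase(before: float, after: float) -> float:
    """Return the relative change from `before` to `after`, in percent."""
    if before == 0:
        raise ValueError("baseline share must be non-zero")
    return (after - before) / before * 100.0


# Shares of all indexed Scopus publications, in percent (values from the text).
share_2019 = 0.0173
share_2022 = 0.0417

increase = relative_increase(share_2019, share_2022)
print(f"Relative increase 2019 to 2022: {increase:.0f}%")
# prints: Relative increase 2019 to 2022: 141%
```

Equivalently, the 2022 share is about 2.4 times the 2019 share; because the measure is a share of all indexed output, the growth of total publication volume is already factored out.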

This growing academic interest reflects not only the societal relevance of conspiracy beliefs but also the evolving ways in which the concept of conspiracy is deployed across disciplines. In this Comment, I offer a brief theoretical proposal that aims to capture a less recognized but increasingly prevalent form of conspiracy thinking, one that may affect academics and scientists themselves, regardless of field. Beyond the two widely accepted categories, namely (1) genuine conspiracies, which refer to historically and legally validated covert actions, and (2) conspiracy theories, which are unproven or speculative beliefs, I suggest a third, distinct use of the conspiracy label: Protective Conspiracy Framing, the rhetorical dismissal of opposing or untested hypotheses as conspiracy thinking.

The standard duality between real conspiracies and conspiracy theories

Scholarly literature commonly distinguishes between two principal uses of the term conspiracy. The first refers to real (proven) conspiracies, involving covert actions and intentional deception by powerful actors that have been historically or legally substantiated. Examples include the Watergate scandal and the Dreyfus Affair, historical cases involving deliberate institutional deception, later confirmed through legal proceedings and documentary evidence. These conspiracies are not merely suspected but validated retrospectively by thorough investigations, making them distinct from speculative or unfalsifiable conspiracy theories.

The second, more psychologically oriented category concerns conspiracy theories or beliefs: epistemically unverified beliefs that significant events are secretly orchestrated by malevolent groups. Typical examples include claims that the 1969 moon landing was faked, that COVID-19 vaccines contain microchips for surveillance via 5G networks, or that climate change is a fabricated narrative2. According to psychological models3, such beliefs often flourish in contexts of uncertainty, anxiety, or perceived powerlessness, where individuals seek cognitively coherent but empirically ungrounded explanations.

Belief in conspiracy theories can be understood as a tendency toward false positives, where a pattern or hidden intention is perceived in the absence of confirmatory evidence. Much like a researcher who commits a Type 1 error and incorrectly rejects a true null hypothesis, the conspiracy theorist sees secret intentions or deception where none has been reliably demonstrated, or even examined.

From Type 2 error to conspiracy framing through premature rejection

Yet, possibly in response to the proliferation of Type 1 conspiracy theories, another epistemic error exists, involving similar processes but operating in the opposite direction: a reflex to dismiss uncomfortable or deviant ideas or hypotheses by labelling them conspiracy theories, even in the absence of clear evidence of irrationality or error. I call this Protective Conspiracy Framing, or Type 2 conspiracy. Protective Conspiracy Framing aligns with Type 2 errors: just as a researcher may fail to reject a false null hypothesis and so miss a real effect, it dismisses hypotheses that have been neither tested nor falsified. It can be understood as a cognitive and rhetorical overreaction, in which labelling an argument a “conspiracy theory” may function as a social signal, potentially serving to uphold dominant norms, ideological orthodoxy, or institutional trust.

This pattern favours conformity and stability over truth-seeking, especially in high-trust environments where deviation may be seen as destabilizing or dangerous. Here, a hypothesis is rejected without falsification because it appears suspicious, unconventional, or politically incorrect. Ironically, such rejection often mirrors the structure of conspiracy belief: attributing hidden, malicious motives or ignorance to opponents without engaging the actual substance of their claims or arguments.
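The analogy between the two error types can be made explicit as a 2 × 2 table crossing the true state of the world with the judgement made about a conspiracy hypothesis. A minimal sketch, assuming the null hypothesis is “no conspiracy”, so that judging a conspiracy to exist amounts to rejecting it; the `Outcome` labels and the `classify_judgement` function are hypothetical and only restate the framing above, they do not measure anything:

```python
from enum import Enum


class Outcome(Enum):
    CORRECT_DETECTION = "real conspiracy recognised"
    CORRECT_REJECTION = "unfounded claim dismissed"
    TYPE_1 = "conspiracy belief (false positive)"
    TYPE_2 = "Protective Conspiracy Framing (false negative)"


def classify_judgement(conspiracy_exists: bool, judged_conspiracy: bool) -> Outcome:
    """Place a (true state, judgement) pair in one cell of the 2 x 2 table.

    Assumed null hypothesis H0: no conspiracy exists.
    Judging that a conspiracy exists corresponds to rejecting H0.
    """
    if judged_conspiracy and conspiracy_exists:
        return Outcome.CORRECT_DETECTION
    if judged_conspiracy and not conspiracy_exists:
        return Outcome.TYPE_1  # H0 is true but rejected
    if not judged_conspiracy and conspiracy_exists:
        return Outcome.TYPE_2  # H0 is false but retained
    return Outcome.CORRECT_REJECTION


# Print every cell of the table.
for exists in (True, False):
    for judged in (True, False):
        print(exists, judged, "->", classify_judgement(exists, judged).value)
```

The sketch simplifies the argument: the point of Protective Conspiracy Framing is that dismissal happens before a hypothesis is tested, so at the moment of judgement the true state, and hence the cell, is not yet known.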

A contemporary version of an old phenomenon

Similar rhetorical strategies have long been recognized. For instance, the philosopher Arthur Schopenhauer noted in The Art of Being Right that arguments are sometimes dismissed not by refuting them, but by associating them with ideas that are already socially discredited4. In modern discourse, this means labelling critics as “conspiracy theorists”, “denialists”, “anti-science” or “extremists”, thereby ending the debate not through reason or argument, but through social delegitimization. A similar rhetorical pattern is captured by Godwin’s Law, an adage of internet culture stating that the longer an online discussion continues, the more likely it becomes that someone will be compared to Hitler or the Nazis5. While anecdotal in nature, the meme illustrates how rhetorical escalation can supplant substantive engagement. Applied to the conspiracy category, these strategies risk having a dissuasive effect: they inhibit critical thinking in politically or socio-emotionally charged contexts, particularly when institutional narratives dominate and nonconformity is stigmatized. This may be because authoritarianism and conformism/conventionalism increase in uncertain times, when the world is perceived as unstable and dangerous6.

The consequences of epistemic overcorrection

While addressing misinformation and conspiracy beliefs is essential, overusing the term ‘conspiracy’ can undermine the epistemic openness vital to both rigorous scientific enquiry and democratic debate. A recent example concerns the origins of COVID-19. In February 2020, leading scientists published statements in The Lancet condemning “conspiracy theories suggesting that COVID-19 does not have a natural origin”7. Although well-intentioned, aiming to protect Chinese researchers and to prevent xenophobia and politicized misinformation, these early declarations may have prematurely closed off legitimate investigation. Such positioning arguably contributed to the stigmatization of competing hypotheses, particularly the lab-leak theory, which remains scientifically plausible8. In this way, the reaction to potential conspiracies mirrored conspiracist logic: using suspicion of intent (e.g., “those proposing the lab-leak are engaging in conspiracy”) to delegitimize alternative perspectives in the name of scientific responsibility9. The media echoed this framing, as exemplified by headlines such as “The rush to find a conspiracy… is driven by narrative, not evidence”10. This episode clearly illustrates Protective Conspiracy Framing: a rhetorical and cognitive tendency to discredit non-mainstream views as conspiracy theories in the name of consensus or stability. While intended to uphold scientific credibility, Protective Conspiracy Framing can marginalize whistleblowers, critical scholars, or investigative journalists, not because their claims lack merit, but because they challenge dominant narratives.

Suspicion in reverse and the logic of defensive framing

A paradox emerges: by readily labelling non-conforming perspectives as conspiracies, Protective Conspiracy Framing may unintentionally mirror the cognitive structure of conspiracy thinking, though in reverse. While classic conspiracy theorists suspect hidden malevolence behind official narratives, Protective Conspiracy Framing attributes problematic intent to dissenting critiques, presuming them to be irrational, dangerous, or strategically misleading. Both rely on unverified assumptions and epistemic suspicion, framing opposition as ideologically harmful rather than evaluating arguments on their merits.

Drawing on the literature on conspiracy theories, I outline two pathways for understanding Protective Conspiracy Framing, reflecting the established distinction between misinformation (unintentional) and disinformation (intentional)11. In its unintentional form, Protective Conspiracy Framing may be driven by cognitive dissonance reduction, in an effort to restore psychological coherence when confronted with unsettling or ambiguous claims. By aligning strongly with perceived consensus and delegitimizing alternatives, individuals may protect their sense of epistemic security and social identity. In its intentional form, Protective Conspiracy Framing may act as a rhetorical tool aimed at marginalizing dissent under the pretext of defending truth, democracy, science, public trust, or social cohesion. While not necessarily conspiratorial in intent, Protective Conspiracy Framing risks replicating key epistemic patterns found in conspiracy thinking: attributing hidden agendas to dissenters, prematurely discrediting alternative hypotheses, and treating deviation as deviance. This, in turn, can weaken the open enquiry and epistemic humility on which scientific and democratic processes rely.

Toward a balanced epistemology

To safeguard both democratic discourse and scientific progress, we must resist the two epistemic extremes: the overproduction of conspiracy beliefs (Type 1 errors) and the premature dismissal of non-conforming perspectives (Type 2 errors). The concept of Protective Conspiracy Framing offers a new lens through which to explore the latter. It invites researchers and institutions to examine not only how people fall for Type 1 conspiracy theories, but also how the fear of being labelled a conspiracy theorist can stand in the way of legitimate divergence. Recognizing this cognitive and rhetorical pattern is essential for cultivating a culture of critical thinking, epistemic modesty, and intellectual pluralism. Just as we study how people can easily and wrongly believe too much, we also need to understand how and why people can wrongly believe too little and dismiss things too quickly.
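The call to resist both extremes mirrors a standard trade-off in statistical decision-making: with evidence of fixed quality, moving the threshold for accepting a hypothesis reduces one error type only by increasing the other. A minimal sketch, assuming an equal-variance Gaussian signal-detection model in which the evidence for a conspiracy is distributed N(0, 1) when none exists and N(d′, 1) when one does; the separation `D_PRIME = 1.5` and the thresholds are arbitrary illustrative values, not estimates from any data:

```python
import math
from typing import Tuple


def normal_cdf(x: float, mean: float = 0.0, sd: float = 1.0) -> float:
    """Cumulative distribution function of a normal distribution."""
    return 0.5 * (1.0 + math.erf((x - mean) / (sd * math.sqrt(2.0))))


def error_rates(threshold: float, d_prime: float) -> Tuple[float, float]:
    """Return (Type 1 rate, Type 2 rate) for a given acceptance threshold.

    A conspiracy hypothesis is 'accepted' when the evidence exceeds the threshold.
    Type 1: accepting it when no conspiracy exists (evidence ~ N(0, 1)).
    Type 2: dismissing it when a conspiracy exists (evidence ~ N(d_prime, 1)).
    """
    type_1 = 1.0 - normal_cdf(threshold, mean=0.0)
    type_2 = normal_cdf(threshold, mean=d_prime)
    return type_1, type_2


D_PRIME = 1.5  # illustrative separation between the two evidence distributions
for threshold in (0.0, 0.75, 1.5, 2.5):
    t1, t2 = error_rates(threshold, D_PRIME)
    print(f"threshold={threshold:4.2f}  Type 1={t1:.3f}  Type 2={t2:.3f}")
```

A lenient threshold corresponds to credulous conspiracy belief and a very strict one to dismissing hypotheses before they can be examined; the two rates are equal at 0.75, midway between the two means. In this model, only better evidence (a larger d′) lowers both rates at once.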

References

1. National Science Foundation. Publication Output by Region, Country, or Economy and by Scientific Field. https://ncses.nsf.gov/pubs/nsb202333/publication-output-by-region-country-or-economy-and-by-scientific-field (accessed 12 June 2025).

2. Douglas, K. M., Sutton, R. M. & Cichocka, A. The psychology of conspiracy theories. Curr. Dir. Psychol. Sci. 26, 538–542 (2017).

3. van Prooijen, J.-W. An existential threat model of conspiracy theories. Eur. Psychol. 25, 16–25 (2020).

4. Schopenhauer, A. The Art of Always Being Right: Thirty-Eight Ways to Win When You Are Defeated (Dover Publications, 2004; original work 1831).

5. Godwin, M. Meme, counter-meme. Wired (1 October 1994). https://www.wired.com/1994/10/godwin-if-2/

6. Osborne, D., Costello, T. H., Duckitt, J. & Sibley, C. G. The psychological causes and societal consequences of authoritarianism. Nat. Rev. Psychol. 2, 220–232 (2023).

7. Calisher, C. et al. Statement in support of the scientists, public health professionals, and medical professionals of China combatting COVID-19. Lancet 395, e42–e43 (2020).

8. Kaiser, J. House panel concludes COVID-19 pandemic came from lab leak. Science (3 December 2024). https://www.science.org/content/article/house-panel-concludes-covid-19-pandemic-came-lab-leak (accessed 21 January 2025).

9. Calisher, C. H. et al. Science, not speculation, is essential to determine how SARS-CoV-2 reached humans. Lancet 398, 209–211 (2021).

10. Ling, H. The lab leak theory doesn’t hold up. The rush to find a conspiracy around the COVID-19 pandemic’s origins is driven by narrative, not evidence. Foreign Policy (15 June 2021). https://foreignpolicy.com/2021/06/15/lab-leak-theory-doesnt-hold-up-covid-china/ (accessed 15 June 2021).

11. Kużelewska, E. & Tomaszuk, M. Rise of conspiracy theories in the pandemic times. Int. J. Semiotics Law 35, 2373–2389 (2022).

Acknowledgements

The author would particularly like to thank his colleagues at the interdisciplinary, interuniversity think tank, “CovidRationnel”, of which he is a member. The think tank has broadened his understanding of how society and academia function over the last five years.

Ethics declarations

Competing interests

The author declares no competing interests.

Peer review

Peer review information

Communications Psychology thanks the anonymous reviewers for their contribution to the peer review of this work. Primary Handling Editor: Marike Schiffer. A peer review file is available.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Vermeulen, N. Seeing conspiracy theorists everywhere as a conspiracy paradox. Commun Psychol 3, 115 (2025). https://doi.org/10.1038/s44271-025-00298-3

Received: 28 May 2025

Accepted: 16 July 2025

Published: 28 July 2025