In 2020, FiveThirtyEight published a chart that was weaponized to spread election disinformation. It still haunts me.

The morning after Election Day in 2020, FiveThirtyEight — the now-defunct political data site, where I worked at the time as a politics writer — published a chart on our liveblog. Within hours, it was being circulated online as supposed proof of election fraud, detached from its context and used to fuel conspiracy theories. It has haunted me ever since.

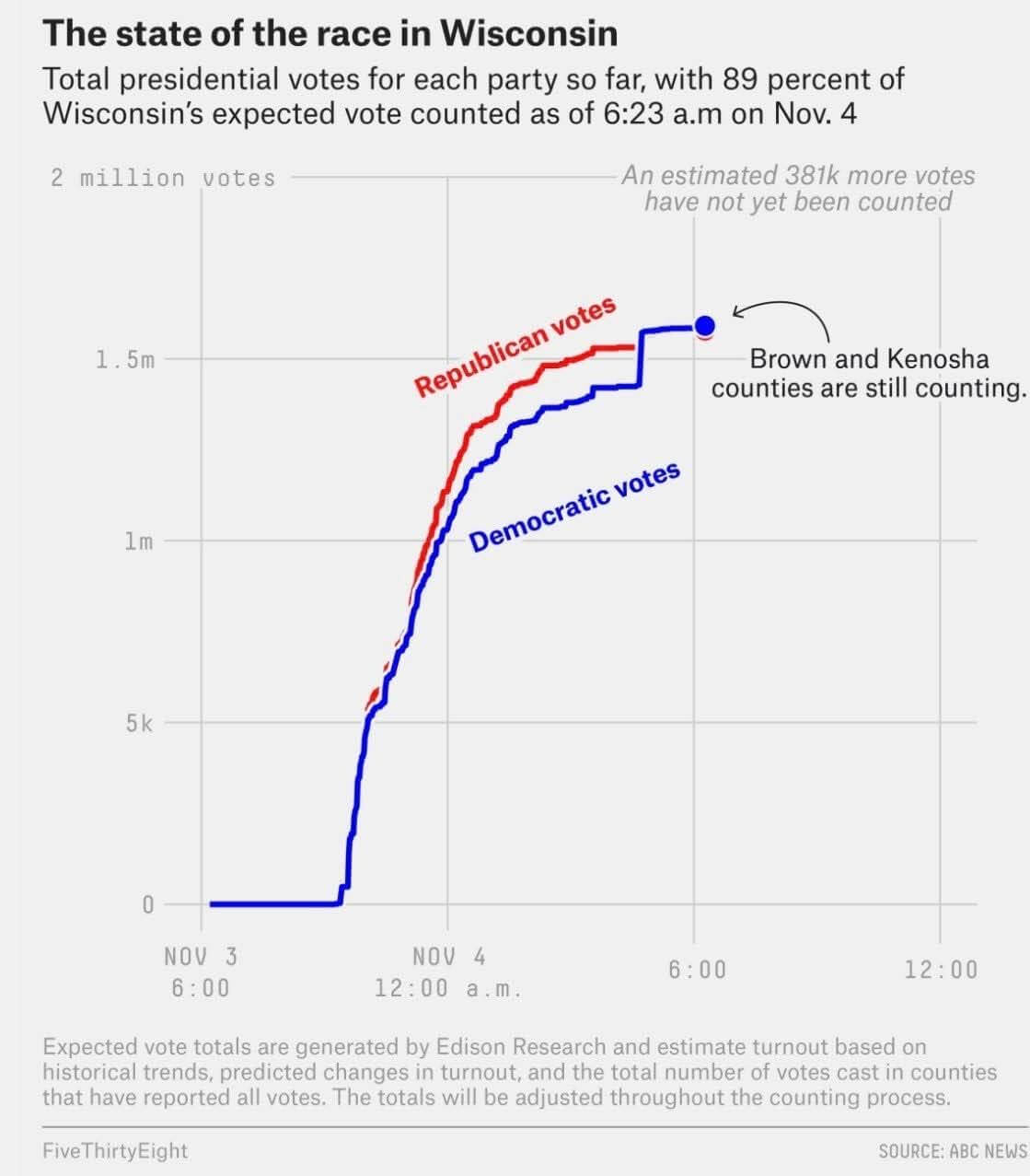

The chart showed the vote totals over time for Wisconsin. At first glance, it appeared that Joe Biden was lagging far behind Donald Trump and then suddenly surged to pull even. Almost immediately, people online seized on the image, claiming that all those Biden votes were actually evidence of fraud. In fact, it was nothing of the sort: Both Trump’s and Biden’s vote totals had increased, the result of a large batch of absentee and mail ballots being counted all at once.

“I remember panicking,” said Laura Bronner, who was FiveThirtyEight’s quantitative editor at the time and made the chart. (Bronner is now a senior applied scientist at the Public Policy Group and Immigration Policy Lab in Zurich.) “I remember having conversations about, ‘What do we do given that this is being misinterpreted?’”

Despite the best efforts of our team and others to fact-check and add context, the chart took on a life of its own — one that has outlived FiveThirtyEight itself. As a reporter who covered disinformation and conspiracy theories, I kept encountering it long after the election was over. Misinterpreted and split from its original context, it became more than just a piece of disinformation and is now a symbol of a broader conspiracy theory.

This chart, published by FiveThirtyEight on Nov. 4, 2020, shows vote totals over time in Wisconsin. Detached from its context, it was widely misrepresented online as evidence of election fraud. (Courtesy: Kaleigh Rogers)

I wanted to understand how that happened, and whether there was anything we could have done differently. It’s a singular example of a broader problem challenging journalists, particularly in an era when artificial intelligence allows anyone to easily manipulate our work. How can we protect our reporting from being used to mislead? Is it even possible?

To truly understand what happened, it helps to remember what the fall of 2020 looked like. (I know, nobody wants to relive that time.) Vaccines were not yet available, masking was common, and a new wave of COVID-19 cases was starting to surge as the cooler weather set in. Public health restrictions varied widely state by state, and Americans’ views of these limitations were divided neatly along party lines.

The pandemic had drastically changed the election, too. Most states expanded access to mail-in and early voting. Experts predicted these changes would result in record-high voter turnout. They were right: 17 million more Americans voted in 2020 than in 2016, the largest increase in voters between two presidential elections on record.

But as with other pandemic measures, support for these voting changes was divided along party lines. President Trump and his administration were pushing back against mail-in voting, warning, without evidence, that it was rife with fraud and urging voters to cast their ballots in person on Election Day.

That dynamic made it easy for election analysts, like my colleagues at FiveThirtyEight, to predict the shape of the results. For one, it was likely we wouldn’t know the winner on election night — the sheer number of voters combined with how close Trump and Biden were polling suggested it could be many days until we knew the results.

We also knew that the lead could shift over time. In-person Election Day ballots are typically counted first, while absentee ballots from early or mail-in voting are usually tallied afterward. Since more Republicans planned to vote in person and more Democrats planned to vote by mail, early results were expected to skew in favor of Trump until the rest of the votes were tallied.

FiveThirtyEight and many other news outlets attempted to prepare readers for this ahead of time, an approach sometimes referred to as “prebunking.” But this only fueled those who had bought into Trump’s claims of fraud, who cited it as evidence of how the “steal” was about to unfold.

This was the heated, tense and uncertain environment in which we were liveblogging when we published the chart. We had already posted similar visuals showing how vote counts changed as more tranches of ballots were counted in swing states. This one reflected a large dump of absentee ballots from Milwaukee, added to the data on the morning of Nov. 4. Cities have lots of people and also tend to lean Democratic, so this added a significant number of votes for Biden — as well as thousands for Trump — closing the gap between the two candidates.

But social media users and right-wing influencers quickly seized on the chart, claiming it showed Biden alone gaining votes and that “vote dumps” to swing the election were happening in the dead of night.

FiveThirtyEight moved quickly to respond. We published multiple blog and social media posts to provide more context and explain what the chart actually showed. We published a video with Bronner walking through it. Other outlets, including PolitiFact, debunked the claims that this was evidence of fraud, too.

But it was too late. As the old adage goes, a lie gets halfway around the world before the truth has a chance to get its pants on. The chart continued to circulate as evidence of the stolen election conspiracy that Trump pushed for months. Over time, stripped of all context, it became a meme, a symbol of the fraud many Americans still believe occurred in 2020. T-shirts with a simplified (and inaccurate) version of the chart are still sold online, sometimes styling the two lines into the “F” of the word “Fraud.” I saw a self-described conspiracy theorist sporting one in a video just last month.

To understand how this happened to this particular chart, I spoke to experts who study the spread of disinformation. A few factors worked against us at once. The biggest one may have been the election itself, which was unusual in many ways, and people weren’t wrong to notice that. But Trump had also spent months sowing doubt and priming his supporters to see anything out of the ordinary as evidence of fraud.

“Throughout the election cycle, they had been hearing that the election was going to be stolen,” said Renée DiResta, a social media researcher and a professor at Georgetown University. “So evidence was picked to fit the frame that they had been hearing. They had been told to expect a steal. Then President Trump lost and they looked for things they could recast as suspicious.”

The chart was far from the only piece of “evidence” seized upon by Trump and his supporters. The use of Sharpies on ballots in Arizona, the way birthdates for same-day registrations are entered in Michigan and other routine election procedures were similarly recast in a suspicious light and contributed to the election fraud conspiracy frenzy.

There was also a structural problem: We published a chart, not a piece of written text. Once it was stripped of context, that put us at a disadvantage. Visualizations pose two challenges. Many people are not trained to read charts, even simple ones, and can easily misinterpret them. At the same time, charts and data carry an aura of objectivity, which lent credibility to the inaccurate readings of ours.

“Research on how effective fact checking is not completely settled on these more complicated modalities of things like visualizations or statistics,” said Maxim Lisnic, a Ph.D. student at the University of Utah who is studying disinformation in visualizations on social media. “Data is just seen as so objective, right? A lot of people do resist further nuanced explanations, because they say, ‘Well, the chart’s there. The data is telling me something.’”

Lisnic co-authored a study in 2023 investigating how social media users use charts to mislead. The researchers suspected users might be more likely to seize on charts that violated basic design principles and were therefore misleading to begin with. Instead, they found users were just as happy to use good-faith data visualizations like ours to support misinformation, employing logical fallacies or misreading the data to misrepresent what the charts actually show.

So, is there anything we could have done differently?

Lisa Fazio, a psychology professor at Vanderbilt University who studies how to correct false beliefs, suggested journalists can try running through possible scenarios before hitting publish and asking a simple question: How might this be misread? In this case, that might have prompted us to put the red Trump line in front so it wasn’t hidden by Biden’s blue line, she said.

Lisnic’s research similarly found that small tweaks like this are often enough to undercut misinformation. His team also suggested that data visualizations include as much information about caveats or uncertainty as possible.

But FiveThirtyEight was already aware of a lot of these best practices. That’s why our chart noted that two large counties were still counting votes. Looking back, it’s difficult to know if any tweaks would have been sufficient to prevent our work from being used to support the claims of fraud.

“I do obviously think that how work is going to be interpreted is an important thing to keep in mind. I think what is hard is to guard yourself against willful misinterpretation and bad-faith attempts at misusing information you put out there,” Bronner said. “There is no way to put out information without putting out information that could potentially be misused.”